Blaine Gernandizo

Professor Woodbury

CYSE 201S

14 October 2025

How To Classify Social Media Bots Using Machine Learning

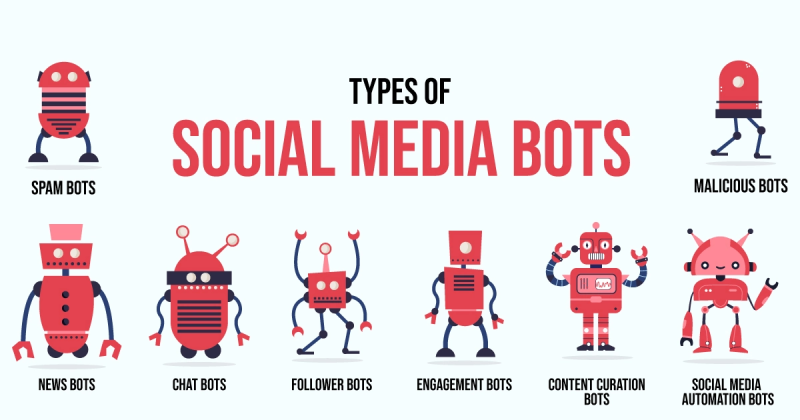

The pervasive influence of automated accounts, commonly known as “social bots,” on online social networks (OSNs) poses a complex challenge in a rapidly evolving technological and societal landscape. Mbona and Eloff (2023) address this issue by developing a method to differentiate malicious bots from benign ones. Their study illustrates several fundamental principles of the social sciences. It demonstrates Empiricism by relying on observable, measurable Twitter metadata to draw conclusions and by analyzing that data for behavioral patterns that support its findings. It demonstrates Determinism by treating the malicious or benign nature of a bot not as random but as determined by identifiable behavioral features such as list count, follower count, and retweet patterns, which implies a cause-and-effect relationship between a bot’s underlying programming and its observable metadata. It demonstrates Ethical Neutrality by presenting its findings objectively: the authors do not advocate for a specific policy or moral stance; instead, they provide analysis that policymakers can use to draw their own conclusions about managing bot activity.

The article poses three research questions. First, it investigates whether features previously used to distinguish humans from bots can also distinguish benign bots from malicious ones. Second, it aims to identify the features that signal anomalous behavior separating malicious bots from benign ones. Third, it explores the feasibility of using semi-supervised machine learning models to classify bots as malicious or benign. To address these questions, the authors employ a feature selection technique, which picks the most relevant features of a dataset to use when training a machine learning model. The data for the study consist of Twitter metadata, including features such as status count, follower count, and list count. The key findings are that a limited set of features is highly effective for classification and that a semi-supervised support vector machine (SVM) model achieved the best performance.
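To make the idea of feature selection concrete, the following is a minimal sketch of one simple filter-style approach: ranking features by a Fisher-style score (between-class spread divided by within-class spread). It is not necessarily the technique the authors used, and the account values, the `accounts` list, and the function names are all hypothetical, invented only for illustration.

```python
from statistics import mean, pvariance

# Hypothetical toy metadata: these numbers are invented for illustration
# and do not come from the study's dataset.
accounts = [
    {"status_count": 900, "follower_count": 50, "list_count": 0, "label": "malicious"},
    {"status_count": 1500, "follower_count": 80, "list_count": 1, "label": "malicious"},
    {"status_count": 300, "follower_count": 60, "list_count": 0, "label": "malicious"},
    {"status_count": 1200, "follower_count": 70, "list_count": 1, "label": "malicious"},
    {"status_count": 1000, "follower_count": 200, "list_count": 20, "label": "benign"},
    {"status_count": 400, "follower_count": 90, "list_count": 22, "label": "benign"},
    {"status_count": 1300, "follower_count": 150, "list_count": 21, "label": "benign"},
    {"status_count": 800, "follower_count": 300, "list_count": 19, "label": "benign"},
]

FEATURES = ["status_count", "follower_count", "list_count"]


def fisher_score(rows, feature, label_key="label"):
    """Between-class variation divided by within-class variation for one feature."""
    values_by_class = {}
    for row in rows:
        values_by_class.setdefault(row[label_key], []).append(row[feature])

    overall_mean = mean(row[feature] for row in rows)
    # How far each class mean sits from the overall mean, weighted by class size.
    between = sum(
        len(vals) * (mean(vals) - overall_mean) ** 2
        for vals in values_by_class.values()
    )
    # How spread out the values are inside each class, weighted by class size.
    within = sum(len(vals) * pvariance(vals) for vals in values_by_class.values())
    return between / within if within > 0 else float("inf")


def rank_features(rows, features):
    """Return the features ordered from most to least class-separating."""
    return sorted(features, key=lambda f: fisher_score(rows, f), reverse=True)


if __name__ == "__main__":
    for name in rank_features(accounts, FEATURES):
        print(f"{name}: {fisher_score(accounts, name):.3f}")
```

In this toy data, list count separates the two classes far more cleanly than follower count or status count, so it ranks first. A real pipeline would keep only the top-ranked features before training a classifier.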

The study’s machine learning model for classifying malicious bots addresses critical human vulnerabilities in cybersecurity. From a sociological perspective, malicious bots are not just technical threats but tools that exploit and disrupt the social fabric by manipulating public opinion and eroding trust. The study demonstrates the limitations of the “human firewall” by showing how bots exploit human factors such as social proof to facilitate cyber victimization. These attacks often target fundamental needs outlined in Maslow’s Hierarchy, such as safety, through financial scams or data leaks. Consequently, marginalized groups are disproportionately affected, including communities in unstable regions targeted by disinformation, as in the Venezuela election, and vulnerable individuals who lack the resources to recover from identity theft following breaches such as the Facebook leak.

The study contributes to society by providing an efficient tool for identifying bots that spread disinformation, manipulate public opinion, and commit fraud. It also makes sophisticated bot detection more accessible and scalable for researchers. In conclusion, the study not only advances current bot detection methods but also plays a crucial role in safeguarding the fundamental needs and privacy of users in the digital ecosystem.

References:

Mbona, I., & Eloff, J. H. P. (2023). Classifying social media bots as malicious or benign using semi-supervised machine learning. Journal of Cybersecurity, 9(1). https://academic.oup.com/cybersecurity/article/9/1/tyac015/6972135